The traditional bottleneck in digital storytelling has long been the acquisition of bespoke soundtracks that perfectly align with a creator’s unique emotional cadence. While visual tools have become increasingly intuitive, high-end music composition has remained a guarded craft, requiring either a massive licensing budget or years of training in composition and sound engineering. Today, the introduction of a sophisticated AI Music Generator is fundamentally altering this power dynamic, shifting the focus from technical execution to creative direction.

In my observation, the most profound impact of this shift isn’t just the speed of production but the elimination of “creative compromise.” Creators are no longer forced to settle for a stock track that is almost right; instead, they can specify the exact atmospheric texture their narrative requires. With neural-network-based generation, scoring a project has shifted from the manual labor of editing to a high-level dialogue between human intent and machine synthesis.

Architecting Emotional Resonance Through Advanced Generative Algorithms

The current generation of audio AI has moved well beyond simple pattern repetition. Its output now reflects a working command of harmonic convention and emotional color, producing melodies that feel intentional rather than clinical. The difference is most audible in how these systems handle transitions and instrumental layering, keeping the audio coherent from the opening bar to the final decay.

The Psychology Of Sound In Modern Digital Environments

Sound functions as the invisible architecture of user experience, influencing subconscious reactions in ways visuals often cannot. When a user inputs a specific mood into a generative engine, the system isn’t just pulling from a database; it is synthesizing a unique acoustic environment. In my experience, the ability to generate “tension” or “relief” on demand provides marketers and filmmakers with a precision tool for audience engagement that was previously inaccessible without a dedicated composer.

Redefining Artistic Ownership In The Age Of Automation

As generative tools become more integrated into professional workflows, the definition of an “artist” is expanding to include those who master the art of the prompt. This democratization of sound design allows a solo YouTuber or a small indie game developer to produce content with the sonic depth of a major studio. The technology acts as a force multiplier, taking a single creative spark and expanding it into a full-scale orchestral or electronic arrangement within seconds.

Evaluating The Performance Metrics Of Generative Music Platforms

To understand the practical utility of these tools, it helps to compare them with traditional sound sourcing. The comparison below focuses on the versatility of the output and its fit into a production workflow; the legal clarity of the resulting files, which is equally crucial for professional distribution, depends on each platform’s licensing terms.

| Evaluation Criteria | Library-Based Sourcing | Generative AI Synthesis |
| --- | --- | --- |
| Narrative Alignment | Static and often mismatched | Dynamic and purpose-built |
| Spectral Variety | Limited to existing recordings | Theoretically unlimited variations |
| Workflow Integration | High friction (search and clip) | Low friction (describe and export) |
| Harmonic Complexity | Fixed at time of recording | Adjustable via iterative prompting |

The Operational Framework For Developing Unique Soundscapes

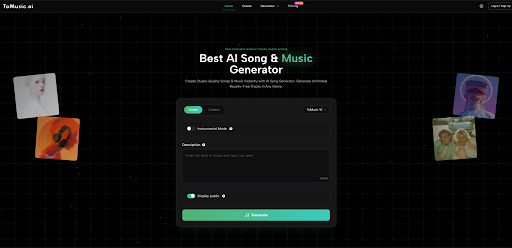

Navigating the ToMusic interface reveals a workflow designed for maximum output with minimal technical overhead. In its current form, the platform condenses the path from a silent timeline to a fully scored project into three logical steps that prioritize user intent over manual knob-turning.

Synthesize The Auditory Blueprint Using Descriptive Prompts

The process begins by translating a visual or narrative concept into a textual description. This stage is where the user defines the genre, the primary instruments—such as a cello or a vintage synthesizer—and the overall energy level. For projects requiring a human touch, the platform also accepts lyrical inputs, which the AI then interprets to create a vocal performance that matches the specified musical style and rhythm.

Iterate And Refine Through Real-Time Audio Previewing

Once the initial synthesis is complete, the user enters the refinement phase. The platform generates an audible preview, allowing for immediate assessment of the track’s suitability. It is important to realize that generative AI is a collaborative partner; if the first result feels too aggressive or perhaps too sparse, adjusting a few keywords in the prompt can redirect the model toward a more nuanced output that better fits the project’s specific requirements.

Execute The Final Export For Professional Distribution

The final step moves the track from the cloud-based environment into the user’s local production suite. The platform offers high-fidelity export options, so the generated music keeps its sonic integrity when played on different speaker systems or integrated into complex video projects. Because the export is streamlined, creative momentum isn’t lost to technical hurdles, which makes the tool well suited to rapid-turnaround content work.

Analytical Perspectives On The Current State Of Audio AI

While the advancement of AI-generated music is undeniable, a professional approach requires acknowledging the technology’s limits. In my testing, the instruments often sounded startlingly realistic, but the most successful results came from users who understood how prompt specificity drives output quality. The technology is most effective when guided by a clear vision of the desired end result.

Managing Expectations In High Complexity Musical Genres

Genres that rely heavily on free improvisation or on culturally specific microtonal systems may still challenge general-purpose AI models. For the vast majority of commercial, cinematic, and personal projects, however, the output is consistent enough for professional use. The key is to treat the generator as a powerful starting point: “digital clay” that can be molded and refined to meet professional standards.

The Trajectory Of Personalized And Contextual Audio

Looking forward, this technology points toward truly adaptive audio, where music is not a static file but a living component of the media it accompanies. For now, today’s AI Music Generator tools provide the foundation for that shift, giving every creator access to a world-class recording studio that runs entirely in the browser.

Optimizing Creative Outcomes Through Strategic Prompt Engineering

Success with generative audio increasingly depends on the user’s ability to communicate effectively with the model. By experimenting with different atmospheric descriptors and structural cues, creators can draw more nuanced results from the AI. This evolution of the creative process emphasizes the human element of “curation,” ensuring that even in an automated world, the final emotional impact of a piece of music remains a distinctly human achievement.